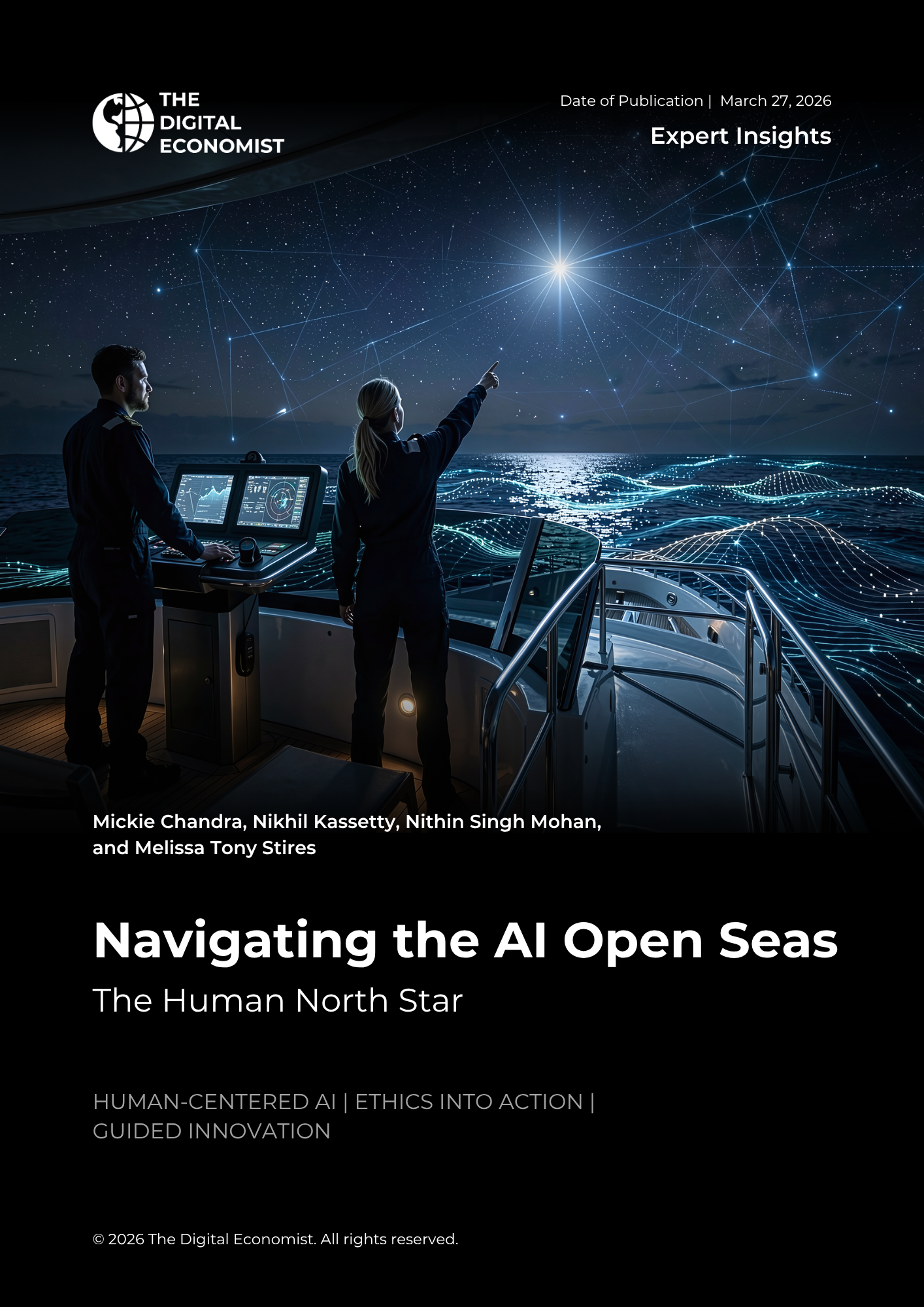

Artificial intelligence is advancing at an extraordinary speed, but the central challenge is not capability alone. It is how to ensure that innovation remains aligned with human values, rights, and well-being. *Navigating the AI Open Seas: The Human North Star* argues that AI should be guided not solely by efficiency or market momentum, but by a durable framework grounded in dignity, accountability, inclusion, safety, and human flourishing. Using the metaphor of navigation, the paper introduces six guiding questions that anchor a human-centered approach to AI. They concern defining shared values, protecting inalienable rights, staying on course toward progress, safeguarding the vulnerable, aligning values across levels of society, and promoting long-term human flourishing.

Drawing on perspectives from technologists, policymakers, educators, and public-interest voices, the paper connects ethical principles to real-world governance. It outlines how values can be operationalized through institutional design, policy frameworks, and system-level guardrails, so that AI development strengthens trust rather than eroding it. The analysis emphasizes that responsible AI requires not only technical safety but also coordinated action across sectors, sustained public engagement, and alignment between individual, organizational, and societal priorities.

The paper concludes by presenting a practical framework for decision-makers tasked with shaping AI in a period of rapid transformation. It argues that the true measure of progress lies not in what AI systems can do, but in whether they improve human lives, expand opportunity, and reinforce the common good. In this sense, the “Human North Star” serves as both a conceptual anchor and a governance imperative, guiding the design, deployment, and oversight of AI toward outcomes that preserve trust, protect humanity, and enable shared prosperity.